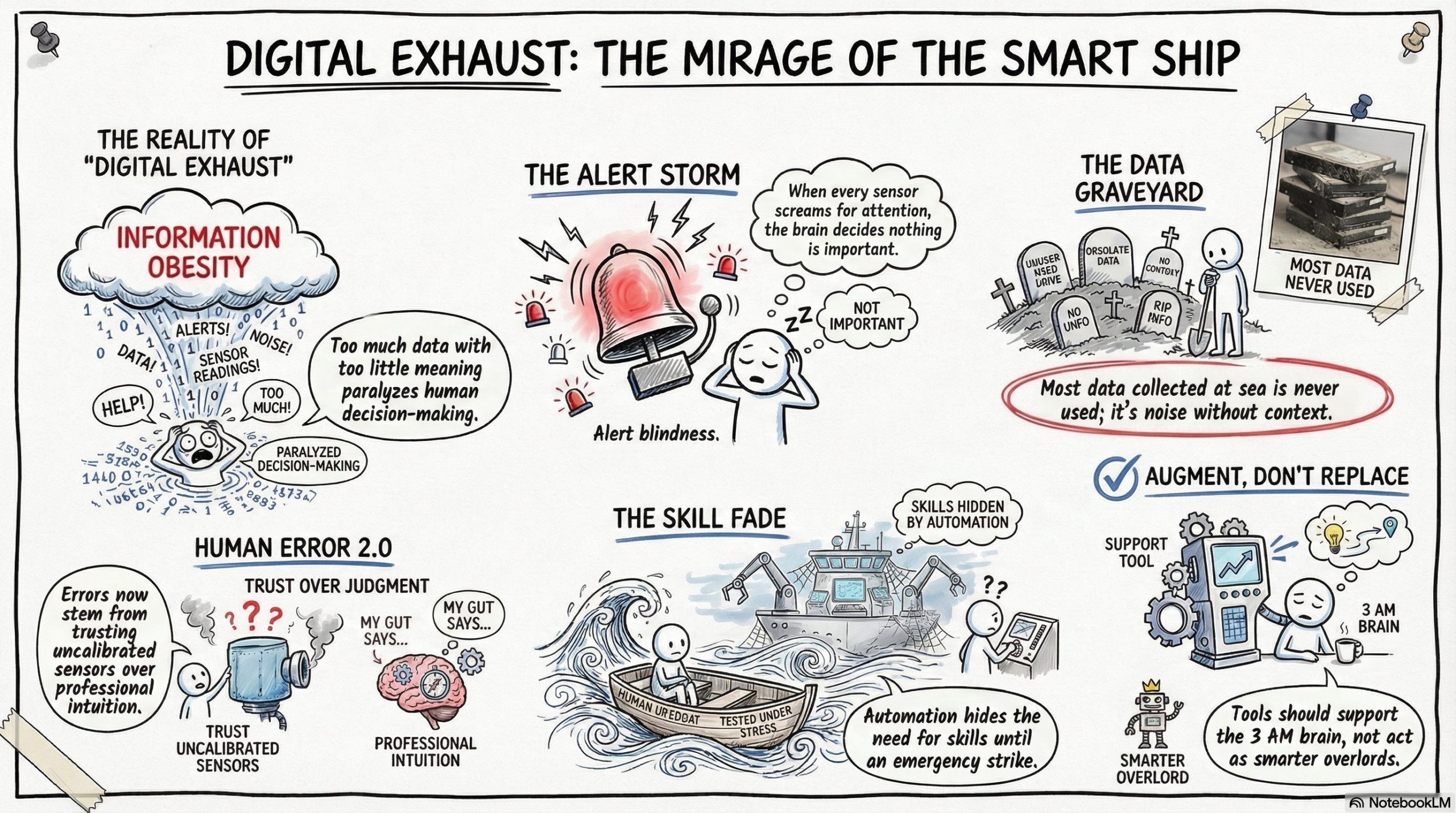

The Digital Delusion (The Tech Mirage) – How Smart Ships Are Creating Dumber Mistakes

Shipping loves the word “smart.”

Smart ships. Smart bridges. Smart data. Smart everything.

We slap “smart” on things like it’s a personality trait. Like the vessel itself is going to wake up one morning, make coffee, and decide to avoid the weather all by itself.

Here’s the thing nobody says out loud:

Most “smart” ships are still held together by people who haven’t slept properly in four months. And those people are now drowning in data.

Welcome to the era of digital exhaust.

The endless stream of numbers, alerts, graphs, and dashboards that look impressive but mostly just… sit there. Exhausting everyone who has to look at them.

The Great Data Illusion

Somewhere along the way, shipping decided that more data = better decisions.

This is adorable. And wrong.

Modern vessels generate enough information to make a server farm sweat. Fuel consumption. Engine vibration. Cargo temperature. Weather routing. Crew heart rate, probably, if someone handed out Fitbits at induction.

But here’s the problem:

Data without context is just noise with a chart attached.

You don’t steer a ship by staring at the wake. Yet most systems are designed to monitor the past and call it “intelligence.”

Every vendor arrives with beautiful dashboards. Trend lines. Heat maps. Pie charts that somehow multiply overnight.

Ask the person on watch what they actually need, and they’ll say: “Fewer things beeping at me would be nice.”

But that doesn’t sell software.

When “Smart” Just Means Overwhelmed

The “smart bridge” sounds amazing. One screen. Everything integrated. Alerts that actually mean something.

In reality?

The officer on watch is fighting the interface more than the weather.

Every sensor demands attention. Every system insists its alert is the priority. The ECDIS beeps. The radar beeps. The autopilot suggests something. The engine telegraph chimes. The satcom terminal wants to know if you’ve seen its latest email.

Before long, the brain does what brains do when everything is important:

It decides nothing is important.

Aviation learned this decades ago. When every alarm screams at once, pilots stop trusting alarms. They call it “alert fatigue.”

Maritime is still living in a room where every fire alarm, smoke detector, and microwave timer goes off simultaneously, and someone wonders why the crew didn’t respond to the really important one.
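What would “fewer things beeping” look like in practice? Here is a minimal sketch, not any vendor’s actual system. The severity names, the `Alert` class, and the `triage` function are all assumptions made up for illustration. The idea is simply that every alert still gets shown, but only the most severe one is allowed to make noise.

```python
from dataclasses import dataclass

# Hypothetical severity ranking (lower number = more urgent).
# A real bridge alert system defines its own categories.
SEVERITY = {"emergency": 0, "alarm": 1, "warning": 2, "caution": 3}


@dataclass(frozen=True)
class Alert:
    source: str    # illustrative labels such as "ECDIS" or "radar"
    severity: str  # one of the SEVERITY keys
    message: str


def triage(alerts, max_audible=1):
    """Rank alerts by severity and let only the top few make noise.

    Returns (audible, silent). Nothing is discarded: silent alerts
    are still available for display, they just do not beep.
    """
    ranked = sorted(alerts, key=lambda a: SEVERITY[a.severity])
    return ranked[:max_audible], ranked[max_audible:]


alerts = [
    Alert("satcom", "caution", "New email received"),
    Alert("radar", "alarm", "Target CPA below limit"),
    Alert("ECDIS", "warning", "Approaching waypoint"),
]

audible, silent = triage(alerts)
print("BEEP: ", [a.source for a in audible])
print("quiet:", [a.source for a in silent])
```

In this toy example the radar gets to beep and the satcom email waits its turn. The hard part in real life is not the sorting. It is getting every system to agree on what “severe” means.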

The Data Graveyard

Here’s an uncomfortable reality:

Most data collected at sea never gets used.

It sits on servers. In clouds. On hard drives that will one day be recycled by someone who has no idea what any of it means.

A digital graveyard of good intentions.

Why? Because dashboards show what happened. They rarely show why.

Even the smartest algorithm can mistake noise for a trend. Remember that company that announced it cut fuel consumption by 4% using AI? Turned out it was just calm weather that week. The AI took credit. The ocean stayed silent.

Machines measure. Humans interpret.

And interpretation requires something no algorithm has: context.
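The calm-weather trap is easy to reproduce. The sketch below uses made-up numbers (not real vessel data) and hypothetical names like `records` and `avg_fuel`. It compares fuel use before and after a change twice: once naively, and once within the same sea state.

```python
from statistics import mean

# Made-up daily records: (period, sea_state, fuel_tonnes_per_day).
# Illustrative numbers only, chosen so the weather mix differs
# between the two periods while per-condition fuel use is identical.
records = [
    ("before", "rough", 52.0), ("before", "rough", 52.0),
    ("before", "calm", 46.0),
    ("after", "calm", 46.0), ("after", "calm", 46.0),
    ("after", "rough", 52.0),
]


def avg_fuel(rows, period, sea_state=None):
    """Mean fuel for a period, optionally restricted to one sea state."""
    values = [fuel for p, s, fuel in rows
              if p == period and (sea_state is None or s == sea_state)]
    return mean(values)


def saving_pct(before, after):
    """Percentage reduction from `before` to `after`."""
    return 100.0 * (before - after) / before


# Naive comparison: ignores that "after" had more calm days.
naive = saving_pct(avg_fuel(records, "before"), avg_fuel(records, "after"))

# Like-for-like comparison: same sea state in both periods.
calm = saving_pct(avg_fuel(records, "before", "calm"),
                  avg_fuel(records, "after", "calm"))
rough = saving_pct(avg_fuel(records, "before", "rough"),
                   avg_fuel(records, "after", "rough"))

print(f"naive saving: {naive:.1f}%")
print(f"calm only:    {calm:.1f}%")
print(f"rough only:   {rough:.1f}%")
```

With these invented figures the naive comparison reports a 4% saving, while the like-for-like comparisons report none. Nothing about the ship changed. Only the weather mix did. That is the context a dashboard does not show by default.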

The Myth of the One Screen to Rule Them All

Vendors love promising the single dashboard. The unified view. The one screen that replaces all screens.

Reality?

A spaghetti junction of incompatible systems. Different logins. Different update cycles. Different ways of saying the same thing badly.

Officers develop “alert blindness.” Engineers go back to paper because paper doesn’t crash. Management wonders why the million-dollar system isn’t delivering million-dollar results.

Meanwhile, the ship keeps floating. The crew keeps coping. The data keeps flowing into the void.

Human Error 2.0: The Upgrade Nobody Wanted

For years, every conference slide deck has declared that technology will “eliminate human error.”

And yet.

Humans remain stubbornly present on ships. Walking. Thinking. Improvising. Ignoring alarms that beep like needy toddlers.

Here’s the truth they don’t put in the brochure:

Human error isn’t disappearing. It’s evolving. And our technology is helping it along.

When Automation Becomes the New Distraction

We’ve all seen it:

The system offers a “recommendation.”

The officer, tired and running behind, accepts it.

The recommendation turns out to be… not great.

Everyone is shocked—except the person who actually read the manual and saw the disclaimer in fine print.

As systems get smarter, people are quietly encouraged to get dumber.

Not because they lack skill. Because automation hides the need for it.

This is the new form of human error:

Not a mistake of judgment.

A mistake of trust.

We trusted the machine. The machine trusted the data. The data came from a sensor that hadn’t been calibrated since 2021.

But sure. Blame the human.
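One modest way to break that chain of blind trust is to make a reading carry its own calibration history. This is a rough sketch under stated assumptions: the one-year threshold is a hypothetical policy, not a regulatory figure, and `reading_with_trust` is an invented helper, not part of any real system.

```python
from datetime import date

# Hypothetical policy: flag any sensor not calibrated within a year.
MAX_CALIBRATION_AGE_DAYS = 365


def reading_with_trust(value, last_calibrated, today):
    """Attach calibration age to a reading so nobody has to guess.

    Returns a dict with the raw value, the age of the calibration
    in days, and a simple trusted/untrusted flag.
    """
    age_days = (today - last_calibrated).days
    return {
        "value": value,
        "calibration_age_days": age_days,
        "trusted": age_days <= MAX_CALIBRATION_AGE_DAYS,
    }


# Illustrative dates and values only.
stale = reading_with_trust(71.3, date(2021, 6, 1), today=date(2024, 6, 1))
fresh = reading_with_trust(70.8, date(2024, 3, 1), today=date(2024, 6, 1))

for name, reading in (("stale", stale), ("fresh", fresh)):
    flag = "OK" if reading["trusted"] else "CHECK SENSOR"
    print(f"{name}: {reading['value']} [{flag}]")
```

The point is not the threshold. It is that the recommendation on screen shows up with a warning attached before the tired officer accepts it, not after the investigation.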

Information Obesity

Here’s a thought experiment:

If you give someone too much food, they get physically sick.

If you give someone too much information, what happens to their decision-making?

It doesn’t get better. It gets paralyzed.

Human Error 2.0 isn’t caused by ignorance. It’s caused by too much information with too little meaning.

The brain freezes. The watch continues. The ship drifts three degrees off course before anyone notices.

Not because no one was paying attention.

Because everyone was paying attention to everything.

Automation Isn’t Autonomous

We love the idea of systems that “run themselves.”

Until they don’t.

When something fails at sea—a sensor, a network, a software patch that was rushed out on a Friday afternoon—it always falls back to the human.

And here’s the irony:

The more automation we introduce, the fewer chances humans get to practice the very skills they’re expected to use in emergencies.

We’ve built ships where humans now act like lifeboats:

Rarely used. Desperately needed. Only tested under stress.

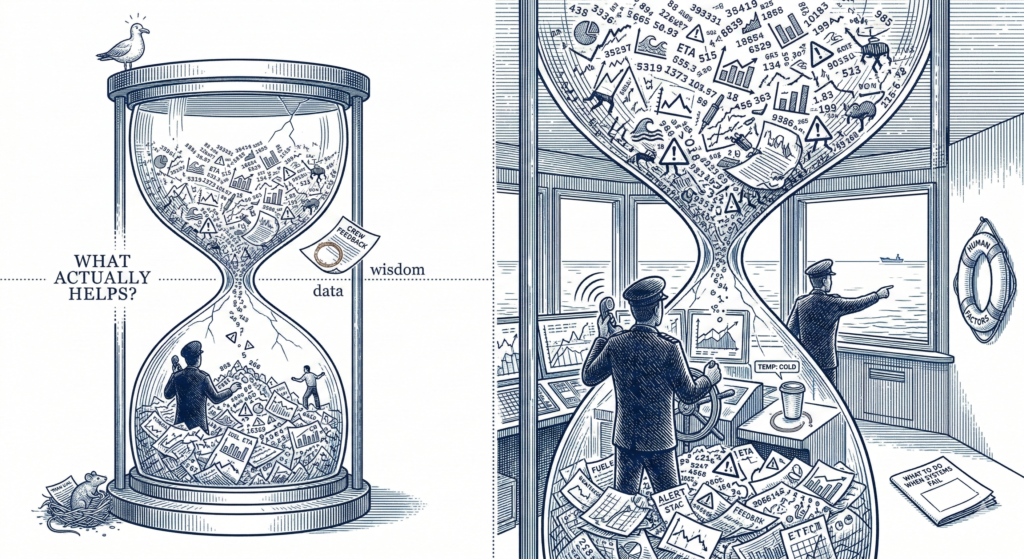

What Aviation Learned (That We Keep Ignoring)

Decades ago, aviation figured something out:

You can’t just pile technology into a cockpit and hope for the best.

You have to design for the human.

- Curate data, don’t just collect it

- Train for failure, not just normal operations

- Build systems that support decisions, not make them

Maritime is still in the “more data = smarter” phase.

We need to move to the “useful data” phase.

Instead of asking “what else can we measure?” ask:

“What actually helps someone decide?”

The Real Fix (It’s Boring. It Works.)

Build tech that respects the human brain.

The one that:

- Works six-hour watches

- Makes decisions at 3 AM

- Juggles fifteen competing priorities

- Has to interpret your beautiful dashboard while also avoiding fishing boats with no lights

The goal isn’t automation or replacement.

It’s augmentation.

Better tools. Not smarter overlords.

Because at the end of the day:

Data doesn’t save ships.

People using data well do.

A Slightly Uncomfortable Truth

Yes, digital tech is real. Yes, it’s here to stay.

But collecting everything because it feels modern?

That’s just shiny. Not smart.

Until we treat human attention as finite, “smart” ships will just be:

Over-informed. Under-thinking.

And the alarms will keep beeping.

Like needy toddlers.