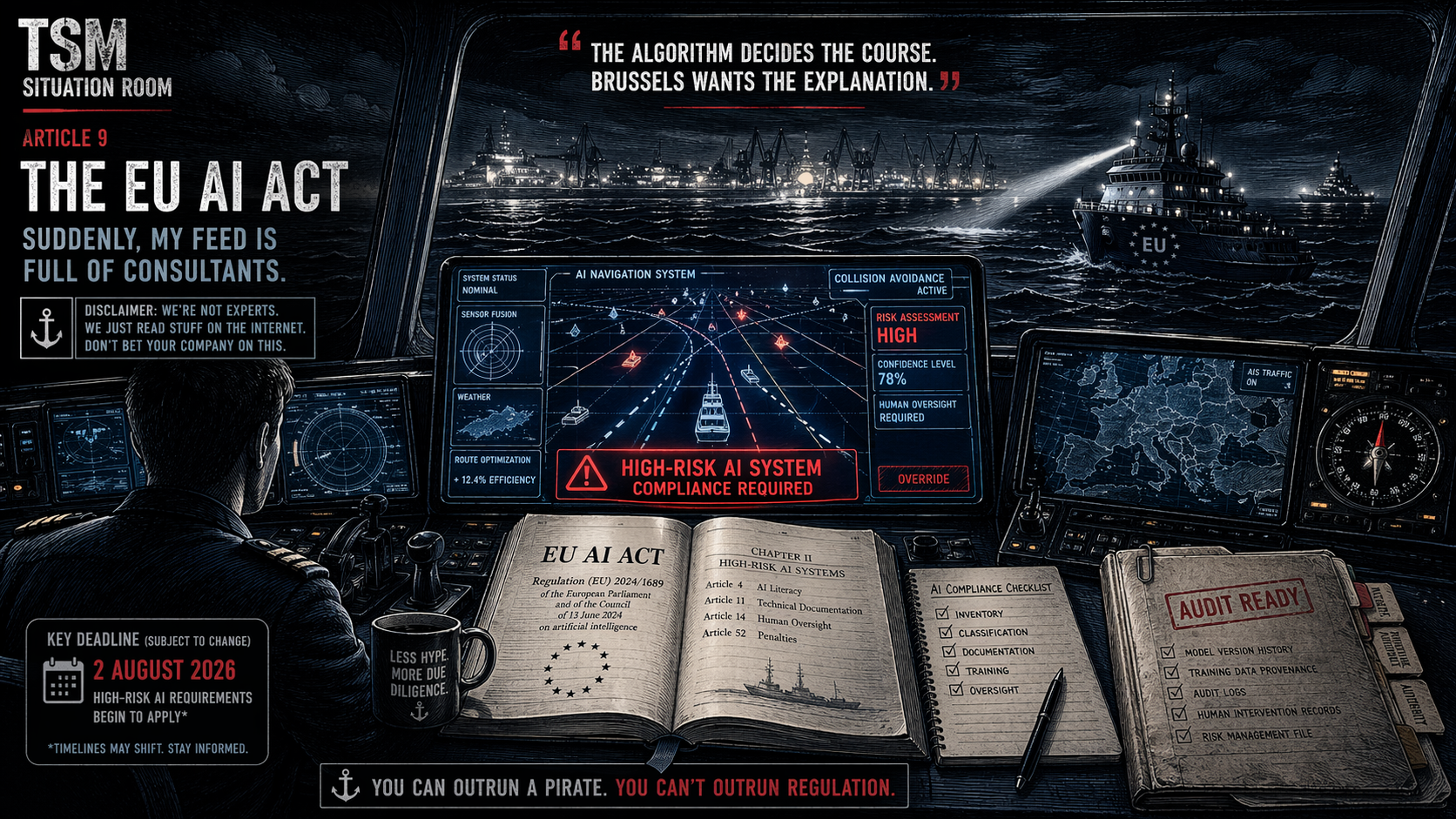

Suddenly, My Feed Is Full of Consultants Talking About the EU AI Act and Shipping

Disclaimer: The Sarcastic Mariner(s) are not legal experts, compliance consultants, or AI engineers. We are professional pot-stirrers with an internet connection and a willingness to read boring documents so you don’t have to. The following is based on skimming the internet, doing our own R&D, and trying very hard not to fall asleep. Read with a pinch of salt—preferably the kind that comes from a ship’s stores after six months at sea.

You’re scrolling LinkedIn. A post from a compliance consultant: “The EU AI Act is coming. Are you ready?” Another from a tech bro: “Replayability is now a product feature.” A third from someone who calls themselves an “AI Ethicist” (which, let’s be honest, sounds like a job that didn’t exist a year ago).

Three months ago, your feed was about the Strait of Hormuz. Now it’s about Brussels. What happened?

The EU AI Act happened. And like a well-trained algorithm, LinkedIn knows you’re in shipping—an industry increasingly powered by AI, increasingly reliant on automation, and increasingly unsure what Brussels expects from it.

We checked it out. Here’s what we found. But first, the disclaimer again: we’re not experts. We just read stuff on the internet. Don’t bet your company on this.

Q1: What is the EU AI Act, and why is my LinkedIn feed full of it?

The Non-Expert Says: It’s the world’s first comprehensive AI law. And key deadlines are getting close.

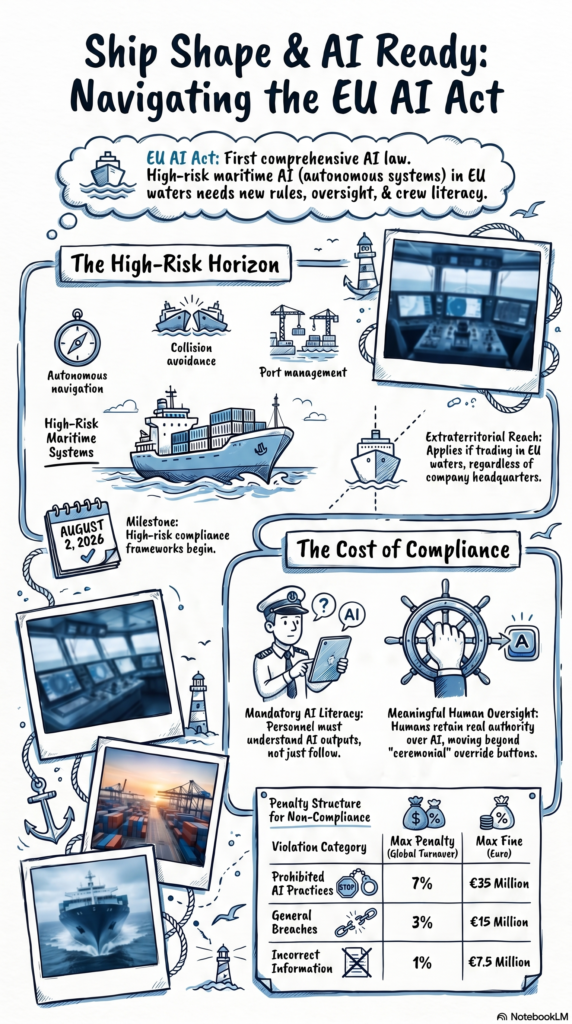

The EU AI Act is legislation passed by the European Union to regulate artificial intelligence. It entered into force in August 2024. For many organizations, August 2, 2026 is a key milestone, when significant parts of the framework—particularly around high-risk AI systems—begin to apply.

But here’s where it gets interesting: there have been discussions and proposals (including late 2025 policy packages) that could adjust or phase certain compliance timelines, particularly for high-risk systems. The exact implementation path is still evolving.

So yes—the deadline is approaching. But the fine print is still being written. Welcome to EU regulation: structured, detailed, and occasionally… fluid.

The TSM Take: The consultants need content. The regulators need precision. And your feed needs something new to panic about. The cycle continues.

Q2: How does this affect shipping? We move boxes, not code.

The Non-Expert Says: You move boxes. But increasingly, code decides how.

Under the EU AI Act, AI systems used in critical infrastructure, including transport, can fall into the high-risk category. That may include:

- Autonomous or decision-support navigation systems

- Collision avoidance algorithms

- AI-assisted port or traffic management systems

If your vessel uses AI for route optimization, engine performance, or predictive maintenance—and operates in or interacts with EU systems—you may fall within scope.

There is growing academic and regulatory consensus that advanced navigation and decision-support systems could be treated as high-risk, depending on their level of autonomy and safety impact.

The TSM Take: The algorithm deciding a course correction in heavy traffic? High-risk.

The one deciding your LinkedIn feed? Questionable, but somehow unavoidable.

Q3: What does the Act actually require? In plain English, please.

The Non-Expert Says: Documentation, oversight, and making sure humans remain… human.

From what we’ve gathered, these are the key requirements. The first applies to any organization that provides or uses AI systems; the other two target high-risk systems:

- AI Literacy (Article 4): Already in effect. Organizations must ensure that personnel interacting with AI systems have an appropriate level of understanding. Not coding, but enough to question outputs.

- Human Oversight (Article 14): Systems must allow meaningful human intervention. In maritime terms: the bridge must retain real authority, not just ceremonial override buttons.

- Technical Documentation (Article 11): Detailed records of how the system works, such as model logic, training data sources, and performance logs. This goes well beyond a user manual.
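To make "the bridge must retain real authority" a little more concrete, here is a toy Python sketch of a human-in-the-loop gate: the AI proposes a heading change, nothing changes until a named officer approves it, and every decision is logged with its rationale. Everything in it (`Recommendation`, `OversightGate`, the field names, the officer) is hypothetical. Article 14 describes outcomes, not code, and a real bridge system would look very different.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Recommendation:
    """A hypothetical AI course-change suggestion shown to the officer of the watch."""
    new_heading_deg: float
    rationale: str  # plain-language explanation the crew can question


@dataclass
class OversightGate:
    """Toy human-in-the-loop gate: the AI proposes, a human decides, everything is logged."""
    current_heading_deg: float
    log: list = field(default_factory=list)

    def review(self, rec: Recommendation, officer: str, approved: bool) -> float:
        # The heading only changes if a named human explicitly approves.
        if approved:
            self.current_heading_deg = rec.new_heading_deg
        # Record who decided what, and why, so the decision can be reviewed later.
        self.log.append({
            "time_utc": datetime.now(timezone.utc).isoformat(),
            "officer": officer,
            "proposed_heading": rec.new_heading_deg,
            "rationale": rec.rationale,
            "approved": approved,
        })
        return self.current_heading_deg


# Hypothetical usage: the same suggestion, first rejected, then accepted.
gate = OversightGate(current_heading_deg=90.0)
rec = Recommendation(new_heading_deg=105.0, rationale="Crossing vessel on starboard bow")
print(gate.review(rec, officer="2/O (hypothetical)", approved=False))  # 90.0
print(gate.review(rec, officer="2/O (hypothetical)", approved=True))   # 105.0
print(len(gate.log))  # 2
```

The point is the shape, not the code: the override is the default path rather than an afterthought, and the log is what you would hand over when someone asks you to explain a decision.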

The TSM Take:

Remember when training meant “here’s the muster station”?

Now it’s “here’s why the algorithm thinks this is a good idea.”

Progress—with footnotes.

Q4: Does this apply to non-EU shipping companies?

The Non-Expert Says: Yes. Geography is no longer a shield.

The Act has extraterritorial reach. It can apply if:

- AI systems are used within the EU

- Outputs affect EU-based users or operations

- Services interface with EU infrastructure or clients

So if your vessel trades in EU waters, interacts with EU ports, or uses systems tied to EU operations—you may be in scope.

The TSM Take:

You don’t need to be based in Brussels.

You just need to pass by.

Q5: What are the penalties if we ignore this?

The Non-Expert Says: Significant enough to get attention at board level.

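The EU AI Act includes tiered penalties, each capped at a fixed amount or a share of worldwide annual turnover, whichever is higher:

- Prohibited practices: up to €35 million or 7% of global turnover

- Other breaches: up to €15 million or 3%

- Supplying incorrect or misleading information to authorities: up to €7.5 million or 1%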

Exact application will depend on enforcement and interpretation—but the intent is clear: this is not symbolic regulation.
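The "X or Y%" wording hides a comparison. For most companies the ceiling is whichever figure is higher; for SMEs and start-ups, the Act flips it to whichever is lower. A minimal Python sketch of that arithmetic, assuming turnover is given in whole euros (the function and tier names are our own labels, and it only computes a ceiling, not what a regulator would actually impose):

```python
# Penalty tiers as summarised above: (fixed cap in EUR, percent of worldwide annual turnover).
# Tier names are our own labels, not terms from the Act.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_breach": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the ceiling for a tier. Regulators set the actual fine case by case."""
    fixed_cap, percent = PENALTY_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent // 100
    # Most undertakings: whichever is higher. SMEs and start-ups: whichever is lower.
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


# A large carrier with EUR 2 billion turnover vs. a small operator with EUR 50 million.
print(f"{max_fine_eur('prohibited_practice', 2_000_000_000):,}")     # 140,000,000
print(f"{max_fine_eur('other_breach', 50_000_000, is_sme=True):,}")  # 1,500,000
```

So for a carrier with €2 billion in turnover, the ceiling for a prohibited practice is €140 million, not €35 million. The headline number is the floor of the ceiling, not the ceiling.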

The TSM Take:

This is where compliance stops being theoretical.

Percentages of global turnover tend to focus the mind.

Q6: What should shipping companies be doing right now?

The Non-Expert Says: Start practical. Start early.

Based on emerging compliance guidance:

- Map your AI systems: Identify where AI is actually used, onboard and ashore.

- Assess risk realistically: If it affects safety or decision-making, assume scrutiny.

- Build documentation early: Retrofitting compliance is always more painful than designing for it.

- Train operational teams: AI literacy is no longer optional, and not just for IT departments.
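For the first two steps, a spreadsheet will do. For teams that prefer code, here is a minimal Python sketch of an AI inventory with a rough triage pass. The `AISystem` fields and the "review first" rule (safety-relevant plus an EU link) are our own simplifications, not the Act's classification test, so treat the output as a to-do list for your lawyers, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One row in a hypothetical AI inventory (onboard or ashore)."""
    name: str
    location: str            # "onboard" or "ashore"
    affects_safety: bool     # could it influence navigation, collision avoidance, machinery?
    touches_eu: bool         # used in EU waters/ports, or outputs used by EU operations?
    has_documentation: bool
    crew_trained: bool


def triage(systems: list[AISystem]) -> list[tuple[str, str, list[str]]]:
    """Rough first-pass triage, NOT a legal classification."""
    results = []
    for s in systems:
        # Safety-relevant systems with an EU link are the most likely to draw scrutiny.
        priority = "review first" if (s.affects_safety and s.touches_eu) else "monitor"
        gaps = []
        if not s.has_documentation:
            gaps.append("documentation")
        if not s.crew_trained:
            gaps.append("training")
        results.append((s.name, priority, gaps))
    # Stable sort: "review first" rows move to the top, original order kept otherwise.
    return sorted(results, key=lambda row: row[1] != "review first")


# Hypothetical inventory entries, for illustration only.
inventory = [
    AISystem("Fuel-consumption dashboard", "ashore", False, True, True, False),
    AISystem("Collision-avoidance advisory", "onboard", True, True, False, True),
]
for row in triage(inventory):
    print(row)
# ('Collision-avoidance advisory', 'review first', ['documentation'])
# ('Fuel-consumption dashboard', 'monitor', ['training'])
```

Note that the training gap is flagged for every system, not just the "review first" ones, since AI literacy is not limited to high-risk systems.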

The TSM Take:

The winners won’t be the ones who panic first.

They’ll be the ones who quietly started last year.

TSM Situation Room Takeaway

The EU AI Act is not a passing trend. It’s a structural shift in how technology is governed—especially in safety-critical industries like shipping.

Timelines may evolve. Guidance will mature. Grey areas will remain.

But the direction is clear:

AI systems will need to be explainable, auditable, and overseen by humans who understand them.

The companies that adapt early will build resilience—and credibility.

The ones that wait may discover that compliance is not something you can rush.

The TSM Final Word:

Your LinkedIn feed isn’t wrong.

The regulators are moving.

The consultants are active.

And somewhere on your bridge, a system is making decisions that—soon enough—someone may ask you to explain.

Are you ready?

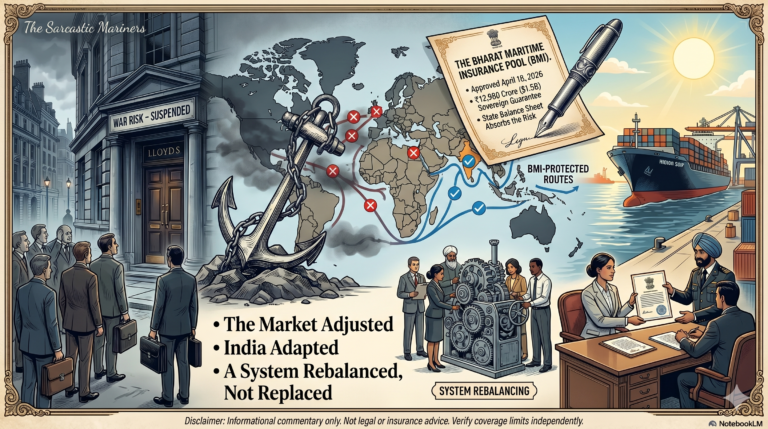

This is part of the Situation Room series. Read [#0: What We Talk About], [#1: 3,200 Ships, One Doorway], [#2: The Strait Closed], [#3: Hormuz Questions], [#4: One Month Adrift], [#5: Strait of Chaos], [#7: India’s Sovereign Play Against the P&I System]